Browse Final Year & Mini Projects

Search by category, subcategory, difficulty and keywords. Find complete ideas with documentation and code.

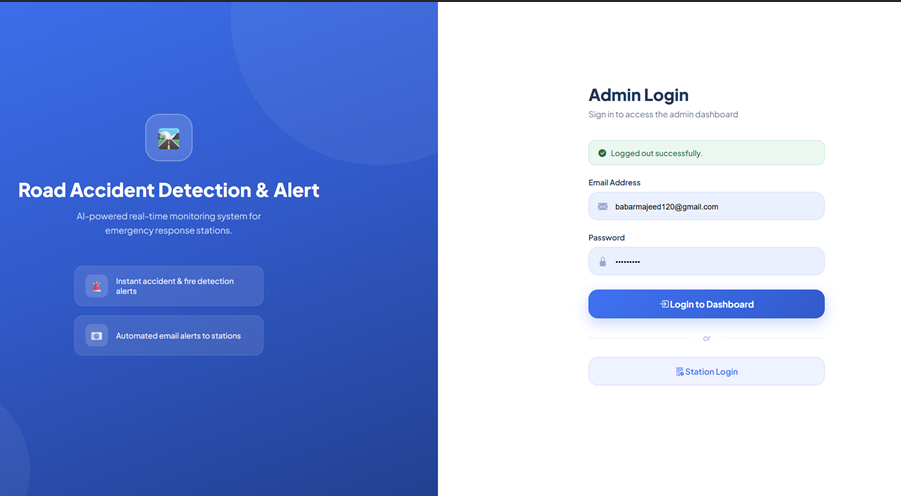

Smart Road Accident and Fire Detection & Emergency Alert System

This project presents an AI-based smart road accident and fire detection system that monitors live camera feeds and detects critical incidents in real time using the YOLOv8 model. The system includes a web-based dashboard built with Flask, HTML, CSS, and JavaScript, and uses Firebase for real-time data storage and station management. When an accident or fire is detected, the system automatically identifies the relevant station and sends alerts by SMS, phone call, and email using an ESP32 microcontroller and a SIM800L GSM module. The proposed solution helps reduce emergency response time, improves road safety, and provides intelligent, automated monitoring for modern traffic environments.

Glucotwin - AI-driven Insulin Dosage Prediction System

GLUCOTWIN is an AI-driven healthcare application developed to support diabetes management in children aged 3 to 12 years. The system helps guardians by predicting insulin dosage from key health factors such as blood pressure, body temperature, BMI, blood sugar condition, meal timing, and carbohydrate intake. The application is built with Flutter and Firebase, and a trained machine learning model processes the health inputs and returns insulin predictions in real time. To improve safety and reliability, doctors can review the predicted results and provide medical recommendations. The system offers a structured, user-friendly, and intelligent platform for better child diabetes care.

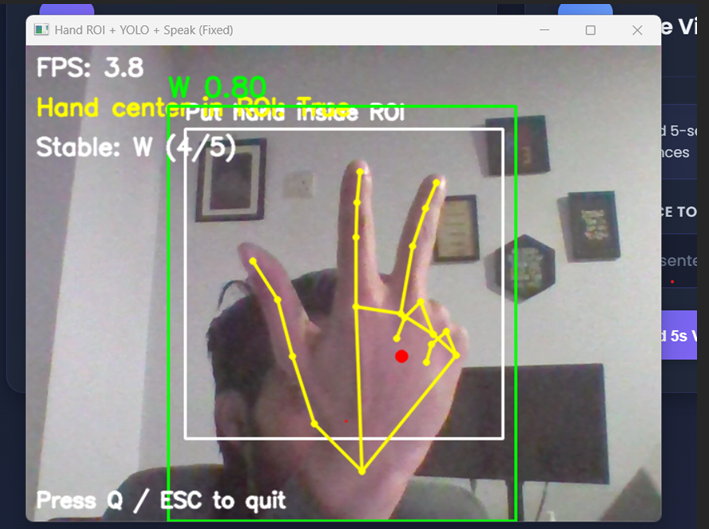

Sign Language Recognition and Translation System Using Machine Learning and Computer Vision

This project presents a smart Sign Language Recognition and Translation System designed to reduce the communication gap between sign language users and people who do not use sign language. The system supports two-way communication by converting text and speech into sign language and translating sign language into text and speech. It uses machine learning and computer vision techniques to recognize hand signs and gestures in real time. YOLOv8 is used for sign alphabet detection, and MediaPipe is used for hand landmark tracking and gesture analysis. The system also stores gesture patterns and hand angle data in JSON format for sentence-level recognition. A Flask-based web interface provides an interactive and user-friendly experience. This project offers a practical solution for real-time communication, accessibility, and learning support.
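The summary mentions storing hand angle data in JSON. The sketch below shows one way to compute finger joint angles from MediaPipe-style hand landmarks and serialize them. It assumes the 21-point MediaPipe Hands indexing and uses only the PIP joint of each non-thumb finger. The record layout (`label`, `pip_angles`) is hypothetical and is not the project's actual format. Landmarks are plain `(x, y, z)` tuples, so the example runs without MediaPipe installed.

```python
import json
import math

Point = tuple[float, float, float]

# MediaPipe Hands landmark indices (MCP, PIP, DIP) for each non-thumb finger.
FINGER_JOINTS = {
    "index": (5, 6, 7),
    "middle": (9, 10, 11),
    "ring": (13, 14, 15),
    "pinky": (17, 18, 19),
}


def joint_angle_deg(a: Point, b: Point, c: Point) -> float:
    """Angle ABC in degrees, measured at vertex b (180 means a straight joint)."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    dot = sum(p * q for p, q in zip(v1, v2))
    n1 = math.sqrt(sum(p * p for p in v1))
    n2 = math.sqrt(sum(q * q for q in v2))
    if n1 == 0 or n2 == 0:
        return 0.0  # degenerate input: coincident landmarks
    cos_theta = max(-1.0, min(1.0, dot / (n1 * n2)))  # clamp for float safety
    return math.degrees(math.acos(cos_theta))


def gesture_record(label: str, landmarks: list[Point]) -> str:
    """Serialize the PIP angle of each finger into a JSON string (hypothetical schema)."""
    angles = {
        finger: round(joint_angle_deg(landmarks[a], landmarks[b], landmarks[c]), 1)
        for finger, (a, b, c) in FINGER_JOINTS.items()
    }
    return json.dumps({"label": label, "pip_angles": angles})


# A fully extended hand: all 21 landmarks lie on one line, so every PIP angle is 180.
straight_hand = [(0.0, float(i), 0.0) for i in range(21)]
print(gesture_record("open_palm", straight_hand))
```

Joint angles are rotation- and scale-invariant, unlike raw landmark coordinates. That property makes them a reasonable feature to store and compare across sequences for sentence-level matching.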

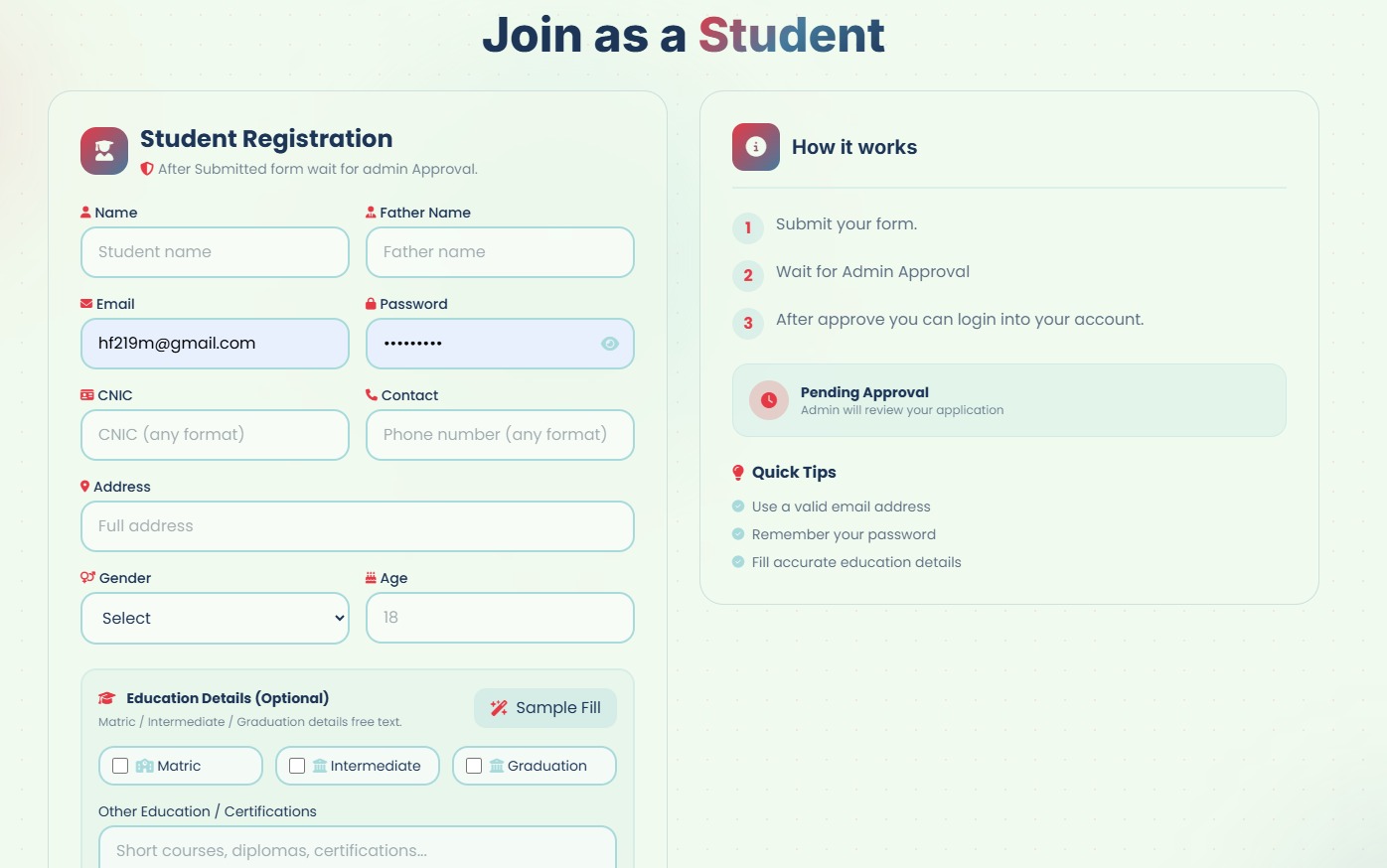

NeuroLearn – AI Powered E-Learning Platform
